“1 million context?” Eh? The longer your agent runs, the dumber it gets

The longer you use your agent, the dumber it gets.

Why? Context rot.

The fine folks at OpenAI just dropped another heater with GPT-5.4, which I personally think kicks ass. AND it shipped with a 1M-token context window.

That’s a massive amount of context, and a lack of context is exactly the problem I posted about a couple of days ago: autonomous workers don’t have enough of it.

So, sounds great for autonomous agents, right? Load up the whole codebase, the full customer history, every document.

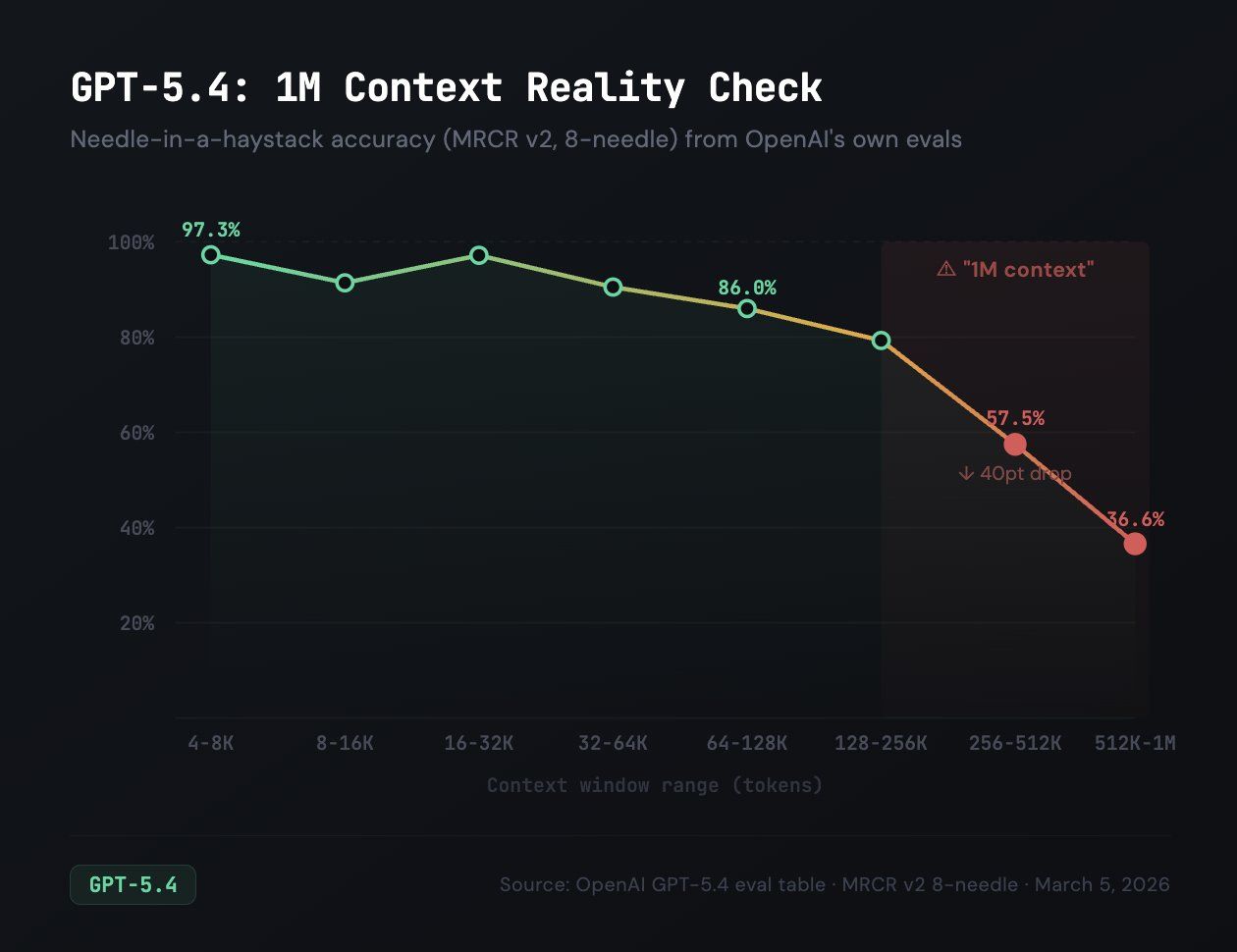

One problem tho. OpenAI’s own evals show retrieval accuracy drops from 97% at 32K tokens to 36% in the 512K-1M range.

Yeah, that’s right. At full context, the model can’t find the right information nearly two-thirds of the time.

Chroma Research tested 18 LLMs and found the same thing. The more tokens you stuff in, the worse the model gets at attending to any specific piece of it.

Note that this isn’t a bug in one model.

This is how transformer attention works. The relationships the model has to track grow quadratically (each 10x in tokens is 100x in relationships):

-> 100 million pairwise relationships at 10K tokens

-> 10 billion at 100K

-> 1 trillion at 1M tokens of context
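The arithmetic behind those three numbers is just n² for n tokens: full self-attention scores every token against every other token. Here’s a minimal Python sketch, assuming plain full attention with no sparse, windowed, or other efficiency tricks. `attention_pairs` is a hypothetical helper name, not from any library.

```python
def attention_pairs(n_tokens: int) -> int:
    """Pairwise scores in one full self-attention map: every token vs. every token."""
    return n_tokens * n_tokens


for n in (10_000, 100_000, 1_000_000):
    # Each 10x in tokens multiplies the pair count by 100x.
    print(f"{n:>9,} tokens -> {attention_pairs(n):,} pairs")
```

A causal decoder masks out roughly half of those pairs (n(n+1)/2), but the growth is still quadratic.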

I’ve been building autonomous worker agents for weeks, and this is what I keep coming back to.

An agent that forgets 64% of what it’s read isn’t a genius with photographic memory. It’s the new hire who skimmed the onboarding docs.

(see what I did there?)

Your agent burns through context fast. Just loading the framework (identity, tools, memory, skills, the actual task) eats a real chunk before any work starts. Then the exploring, reading, reasoning, and coding pile on.

By the time the agent is deep into a project, it’s swimming in tokens and dropping instructions at random.

If you’re building agentic workflows in B2B and letting them run on long contexts without a strategy, they are silently getting worse.

In my experience, they usually won’t crash or throw errors. They just quietly forget things.

The operational sweet spot is roughly 16K-272K tokens. That’s where you get 97% accuracy and stay under GPT-5.4’s pricing cliff: input costs double past 272K. And like Anthropic, they bill the entire session at the higher rate once you extend the context window, not just the overage.
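To see why whole-session repricing stings, here’s a minimal Python sketch that counts billed input in “base-rate token equivalents” (multiply by your real per-token price to get dollars). It assumes only the 272K threshold and 2x input multiplier described above, treats one request’s input as the session, and ignores output and cached-token pricing. `billed_units` and `overage_only` are hypothetical names for illustration.

```python
CLIFF_TOKENS = 272_000  # assumption: the input-token threshold described above
LONG_CONTEXT_MULTIPLIER = 2  # "input costs double" past the cliff


def billed_units(input_tokens: int) -> int:
    """Whole-session repricing: cross the cliff and EVERY input token pays 2x."""
    if input_tokens > CLIFF_TOKENS:
        return input_tokens * LONG_CONTEXT_MULTIPLIER
    return input_tokens


def overage_only(input_tokens: int) -> int:
    """Counterfactual for comparison: only tokens past the cliff pay 2x."""
    over = max(0, input_tokens - CLIFF_TOKENS)
    return min(input_tokens, CLIFF_TOKENS) + over * LONG_CONTEXT_MULTIPLIER


for n in (200_000, 272_000, 300_000):
    print(f"{n:,} input tokens: whole-session={billed_units(n):,} overage-only={overage_only(n):,}")
```

Going from 272K to 300K input tokens (about 10% more) more than doubles the bill under whole-session repricing, versus roughly 21% more if only the overage were repriced.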

What works for me so far: context compaction, chunked retrieval, structured memory, regular pruning. Feed the model what it needs for the current task, not everything you have.
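Here’s a minimal Python sketch of the “feed it what it needs” idea, combining pruning and compaction: keep the system prompt, keep the newest turns that fit a token budget, and collapse everything older into one compacted note. It assumes whitespace word counts as a stand-in for real tokenizer counts, and `compact` is a hypothetical placeholder that truncates where a real agent would summarize with a model call. None of these names come from a specific agent framework.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Crude stand-in: whitespace words. Use the model's real tokenizer in practice.
    return len(text.split())


def compact(turns: List[str], max_words: int) -> str:
    # Hypothetical placeholder "summary": first few words of each dropped turn,
    # capped so the note (prefix included) stays within max_words words.
    # A real agent would summarize these turns with a model call instead.
    words: List[str] = []
    for turn in turns:
        words.extend(turn.split()[:5])
    return " ".join(["[compacted]"] + words[: max(0, max_words - 1)])


def build_context(
    system: str,
    history: List[str],
    budget: int,
    summary_reserve: int = 50,
    counter: Callable[[str], int] = count_tokens,
) -> List[str]:
    """System prompt + one compacted note for old turns + newest turns that fit."""
    # Reserve room for the system prompt and the compacted note up front.
    remaining = budget - counter(system) - summary_reserve
    kept: List[str] = []
    cut = len(history)  # history[:cut] gets compacted, history[cut:] is kept verbatim
    # Walk newest-first so the current task state survives pruning.
    for i in range(len(history) - 1, -1, -1):
        cost = counter(history[i])
        if cost > remaining:
            break
        kept.append(history[i])
        remaining -= cost
        cut = i
    kept.reverse()
    context = [system]
    if cut > 0:
        # compact() sizes its note in words, matching the default counter.
        context.append(compact(history[:cut], summary_reserve))
    return context + kept
```

Walking newest-first is the design choice that matters: the oldest exploration gets squeezed into a note, while the turns closest to the current task stay verbatim.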

1M context is a spec-sheet number. Build your agents for a much smaller one and your task accuracy will skyrocket.